Docker containers enable developers and operators to build and ship an application anywhere. The author discusses the popularity of Docker container technology, and its future relevance and scope.

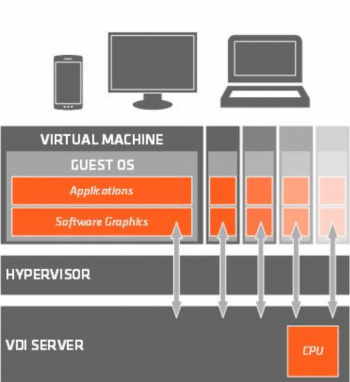

Virtualisation refers to the creation of a ‘virtual’ technology—an abstraction built to support a diverse set of applications and systems using the same base set of resources. Virtual machines (VMs) are the implementation of virtualisation for an entire machine. They emulate the hardware, networking and storage, providing an isolated system for the end user. A VM provides the complete set of functionality an operating system would have provided. Multiple VMs can be run on a single system, thus optimising the usage of system resources and reducing the need for separate hardware for each system. Specialised software, known as a hypervisor, is often used on servers to manage the different VMs. If you have surfed the Internet, you have probably been exposed to a virtual machine without realising it. That is the beauty of this technology!

The technology has, in fact, developed over a span of decades. It began in the 1960s with IBM’s first commercial mainframe to support virtualisation, which evolved in response to demand from Bell Labs and the Massachusetts Institute of Technology.

Containers

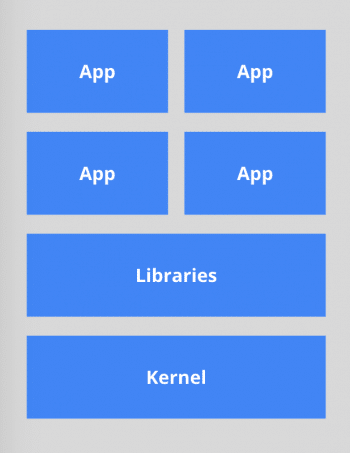

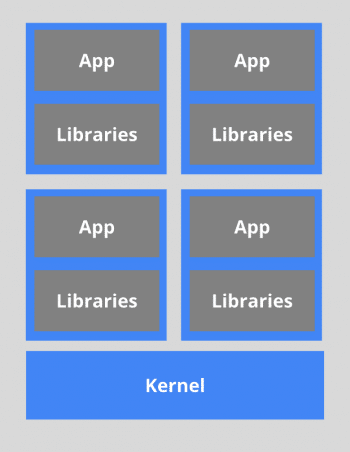

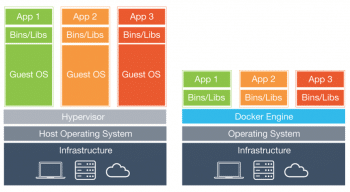

You’d think containers were a modern update to virtual machines, but they in fact date back to the 1970s and the ‘chroot’ command of UNIX machines, which gives a process and its children a new root directory. This introduced the concept of isolating processes from each other without the need for hardware-level virtualisation. Linux Containers (LXC) was one of the first comprehensive implementations of the container ecosystem. Several decades and a series of updates later, we have containers that are a speedier, more efficient construct, which use operating system-level virtualisation instead. Containers offer a number of advantages over virtual machines, an important one being that they are lightweight and easier to deploy at scale in cloud-based infrastructure.

Docker

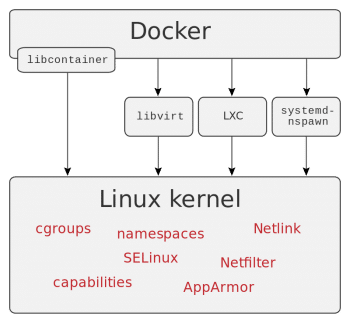

Docker is software that offers a means to develop and maintain containers. It originally relied on the LXC interface and uses the concepts of control groups (cgroups) and namespaces in Linux; however, the team has since developed its own library, called libcontainer, which is used in current iterations.
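To make the idea of namespaces a little more concrete, here is a minimal Python sketch that lists the namespaces the current process belongs to. It assumes a Linux host that exposes namespace links under `/proc/<pid>/ns`; the function name `list_namespaces` is made up for this illustration and is not part of Docker. On other systems the function simply returns an empty dictionary.

```python
import os


def list_namespaces(pid="self", proc_root="/proc"):
    """Return {namespace_name: identifier} for a process, or {} if unavailable.

    Assumption: a Linux kernel that exposes /proc/<pid>/ns as symlinks.
    """
    ns_dir = os.path.join(proc_root, str(pid), "ns")
    namespaces = {}
    try:
        entries = sorted(os.listdir(ns_dir))
    except OSError:
        # Not Linux, or /proc is not mounted: nothing to report.
        return namespaces
    for name in entries:
        try:
            # Each entry is a symlink whose target looks like "pid:[4026531836]".
            namespaces[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            # Some entries may be unreadable without extra privileges.
            continue
    return namespaces


if __name__ == "__main__":
    for name, identifier in list_namespaces().items():
        print(f"{name:18s} {identifier}")
```

Two processes that show the same identifier for a given entry share that namespace. A process running inside a container would normally show different identifiers from a process on the host for the namespaces the container runtime has isolated.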

The most important reason behind the high adoption rate of Docker is that it is lightweight. Docker images are much lighter to deploy than hypervisor-based systems like virtual machines: a container is typically a few megabytes in size versus the gigabytes of a VM, and it boots in seconds, in contrast with the minutes it takes for even the faster VMs to launch. This, in turn, increases the density of containers (compared to VMs) that can be deployed on a host.
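The density argument is simple arithmetic, and a rough Python sketch can show it. The host size and per-instance footprints below are hypothetical round numbers chosen only to reflect the 'megabytes versus gigabytes' contrast; they are not measurements, and real deployments are constrained by CPU, storage and workload as well.

```python
def instances_per_host(host_capacity_mb, per_instance_mb):
    """Return how many whole instances of a given footprint fit on a host."""
    if per_instance_mb <= 0:
        raise ValueError("per_instance_mb must be positive")
    return host_capacity_mb // per_instance_mb


# Hypothetical figures, for illustration only.
HOST_CAPACITY_MB = 64 * 1024       # a host with 64 GB available
VM_FOOTPRINT_MB = 2 * 1024         # assume each VM needs about 2 GB
CONTAINER_FOOTPRINT_MB = 64        # assume each container needs about 64 MB

if __name__ == "__main__":
    vms = instances_per_host(HOST_CAPACITY_MB, VM_FOOTPRINT_MB)
    containers = instances_per_host(HOST_CAPACITY_MB, CONTAINER_FOOTPRINT_MB)
    print(f"VMs per host:        {vms}")
    print(f"Containers per host: {containers}")
```

Under these assumed numbers the same host fits 32 VMs or 1,024 containers, which is the kind of gap the density claim refers to.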

Docker Hub has gone a step further by providing a complete ecosystem for sharing and distributing images as well as maintaining existing deployments.

Enterprise usage

The application life cycle is a labour-intensive circle of development, integration and testing. Having a containerised environment greatly simplifies the dependency management, scalability and deployment of applications, since the deployment environment can be made completely identical across development and production servers. This is why Docker’s simplification of complex development environments makes it a leading product across the industry.
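One way to picture 'identical across development and production' is that both environments start the same image and differ only in the configuration passed in. The Python sketch below builds a `docker run` command line without executing it. The image name `example/webapp`, the tag and the `APP_ENV` variable are hypothetical; `--rm` and `--env` are standard `docker run` options.

```python
import shlex


def docker_run_command(image, tag, env=None, command=()):
    """Build (but do not execute) a `docker run` argument list."""
    argv = ["docker", "run", "--rm"]
    # Sort the variables so the generated command is reproducible.
    for key, value in sorted((env or {}).items()):
        argv += ["--env", f"{key}={value}"]
    # Pinning an explicit tag keeps every environment on the same image.
    argv.append(f"{image}:{tag}")
    argv.extend(command)
    return argv


if __name__ == "__main__":
    # Hypothetical image and settings, for illustration only.
    for app_env in ("development", "production"):
        argv = docker_run_command("example/webapp", "1.4.2", {"APP_ENV": app_env})
        print(shlex.join(argv))
```

The two printed commands differ only in the value of `APP_ENV`; the image reference, and therefore the application and its dependencies, is the same in both.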

Docker containers make efficient use of host resources, and their isolation leads to better and more predictable system performance. Isolation also helps security: even if a user is able to compromise a container and gain superuser privileges inside it, this user remains largely isolated from the rest of the system. Portability to many operating systems and the version control feature make the technology an attractive package for widespread adoption across the industry.

Rapid adoption

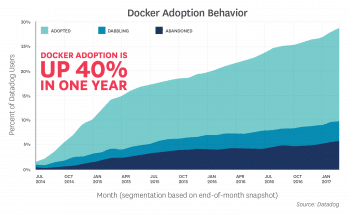

Docker has fast become the industry standard for containerisation, with a 40 per cent increase in adoption across all hosts, according to a study published by the leading metrics and monitoring provider, Datadog. The study further claimed that there is a linear increase in the containers deployed by users, with the numbers nearly quintupling in the first 10 months after users are introduced to containers. The major trend observed has been the deployment of several containers simultaneously on a single host.

In a similar study, leading analytics provider Sysdig claims to have observed as many as 95 containers running on a host. A majority of the hosts used Kubernetes as an orchestrator to manage these containers, while maintaining a registry of containers to enable easy sharing between users and services.

The future

An analysis of current trends

The DockerCon 2016 keynote speech by Docker’s CEO Ben Golub provided many insights into the current level of adoption of this technology:

- Dockerised applications have grown about 3100 per cent over the past two years to about 460,000 in number.

- Over 4 billion containers have been shared among the developer community. Analysts are touting 8 billion as the number for 2017.

- There are nearly 125,000 Docker Meetup members worldwide, which is nearly half of the population of Iceland.

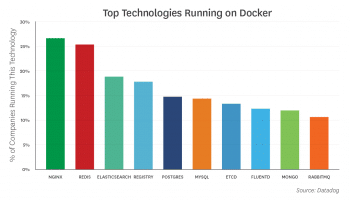

Docker has spurred the development of a number of projects including CoreOS and Red Hat’s Project Atomic, which are designed to be minimalist environments for running containers. The top 10 technologies running in Docker environments, as listed by Datadog, are shown in Figure 7.

Projections

What began with 1146 lines of code has today turned into a billion-dollar product. Docker has grown to a stage where a majority of leading tech firms have been motivated to release additional support for deploying containers within their products. Examples include Amazon integrating Docker into the Elastic Beanstalk system, Google introducing Docker-enabled ‘managed virtual machines’, and announcements from IBM and Microsoft with regard to Kubernetes support for multi-container environments. Docker has already become a part of major Linux distributions such as Ubuntu, CentOS and Red Hat Enterprise Linux (RHEL), although the packaged versions often fail to keep up with the latest releases.

The Docker team has clearly laid out its goals of developing the core capabilities, cross-service management and messaging. It is likely that, in the future, there will be more focus on building and deploying applications than on the level of virtualisation, at which point the fine line between VMs and containers will become fuzzy.

In any case, the fact that Docker has breached the billion-dollar valuation mark is an indication of the underlying potential of this technology. Docker is clearly here to stay!