This article looks at the backbone technologies of cloud computing and suggests some research areas in this field, as well as a few resources.

Cloud computing is an emerging domain in which remote resources are used on demand, without the need for physical infrastructure at the client end. In cloud computing, the actual resources are installed and deployed at remote locations. This article offers guidelines for research scholars and practitioners working in cloud computing and related technologies.

Backbone technologies in cloud computing

Virtualisation

Virtualisation is the core technology behind cloud computing: an actual cloud is implemented using virtualisation. In cloud computing, virtual machines are created dynamically to give an end user or developer at a remote location access to the actual infrastructure.

A virtual machine (VM) is a software implementation of a computing device that executes programs just as a physical machine would. When a user or developer works on a VM, the resources of the remote machine, including all the programs installed on it, are accessed through a specific set of protocols. To the end user of the cloud service, the VM behaves like an actual machine.

Prominent virtualisation software

Prominent virtualisation software packages for Windows and Linux host OSs include:

Windows as the host OS

- VMware Workstation (wide range of guest OSs)

- VirtualBox (wide range of guest OSs)

- Hyper-V (wide range of guest OSs)

- Microsoft Virtual PC

Linux as the host OS

- VMware Workstation

- VMLite Workstation

- VirtualBox

- Xen

A hypervisor or virtual machine monitor (VMM) is a piece of computer software, firmware or hardware that creates and runs virtual machines. A computer on which a hypervisor is running one or more virtual machines is defined as a host machine. Each virtual machine is called a guest machine. The hypervisor presents the guest OSs with a virtual operating platform and manages their execution. Multiple instances of a variety of OSs may share virtualised hardware resources.
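To make the idea of a hypervisor managing the execution of its guests more concrete, here is a toy Python sketch in which several guest VMs share one physical CPU through round-robin time slices. `GuestVM`, `run_round_robin` and the `time_slice` parameter are hypothetical names chosen for illustration; real hypervisors use far more sophisticated schedulers.

```python
from collections import deque
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class GuestVM:
    name: str
    work_units: int  # remaining CPU work this guest wants to run (hypothetical unit)


def run_round_robin(guests: List[GuestVM], time_slice: int = 2) -> List[Tuple[str, int]]:
    """Share a single physical CPU among guests in fixed time slices.

    Returns (guest name, finish time) pairs in the order the guests complete.
    This is a toy model of a VMM scheduling its guests, not a real hypervisor.
    """
    if time_slice <= 0:
        raise ValueError("time_slice must be positive")
    queue = deque(guests)
    clock = 0
    finished = []
    while queue:
        vm = queue.popleft()
        ran = min(time_slice, vm.work_units)  # run for one slice or until done
        vm.work_units -= ran
        clock += ran
        if vm.work_units > 0:
            queue.append(vm)  # preempted: back to the end of the queue
        else:
            finished.append((vm.name, clock))
    return finished
```

For guests A, B and C needing 3, 5 and 1 units of work with a time slice of 2, the guests finish in the order C (at time 5), A (at time 6) and B (at time 9): the short job completes early because no single guest can monopolise the shared CPU.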

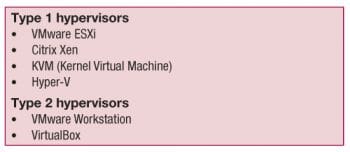

Hypervisors of Type 1 (bare metal installation) and Type 2 (hosted installation)

Type 1 hypervisors are used in the implementation and deployment of cloud services, and they are associated with the concept of bare metal installation. They are installed directly on the hardware, so no host operating system is needed, and there is therefore no host OS whose corruption could affect the virtual machines. Multiple virtual machines are then created on top of the hypervisor.

A Type 1 client hypervisor, as used in desktop virtualisation, interacts directly with the hardware being virtualised. Unlike a Type 2 hypervisor, it is completely independent of any operating system and boots before the guest OSs. Major vendors in the desktop virtualisation space, including VMware, Microsoft and Citrix, offer Type 1 hypervisors.

Type 2 (hosted) hypervisors run within a conventional operating system environment. With the hypervisor as a distinct second software layer, guest operating systems run at the third level above the hardware. A Type 2 hypervisor is a client hypervisor that sits on top of a host operating system and, unlike a Type 1 hypervisor, relies heavily on it. It cannot start until the host OS is up and running, and if the host OS crashes for any reason, all guest VMs, and therefore all end users, are affected. This is a major drawback of Type 2 hypervisors, as they are only as secure as the operating system they rely on. Also, since they depend on an OS, they are not in full control of the end user's machine.
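The layering difference between the two hypervisor types, and why a host OS crash matters only for Type 2, can be captured in a small model. This is a hypothetical sketch: the `Stack` class, the layer names and the helper functions are illustrative assumptions, and the model captures only the dependency order of the layers, not actual hypervisor behaviour.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Stack:
    """Software layers listed from just above the hardware upwards."""
    name: str
    layers: Tuple[str, ...]


# Type 1 (bare metal): the hypervisor runs directly on the hardware
TYPE1 = Stack("Type 1", ("hypervisor", "guest OS"))
# Type 2 (hosted): the hypervisor runs as software inside a host OS
TYPE2 = Stack("Type 2", ("host OS", "hypervisor", "guest OS"))


def guest_level(stack: Stack) -> int:
    """Level of the guest OS above the hardware (1 = directly on the hardware)."""
    return stack.layers.index("guest OS") + 1


def guests_survive(stack: Stack, crashed_layer: str) -> bool:
    """Guests keep running only if the crashed layer is not one they sit on."""
    layers_below_guest = stack.layers[: stack.layers.index("guest OS")]
    return crashed_layer not in layers_below_guest
```

In this model the guest OS sits at level 2 for Type 1 and level 3 for Type 2, and `guests_survive(TYPE2, "host OS")` is `False` while the same call for `TYPE1` is `True`, since a bare-metal stack has no host OS underneath its guests.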

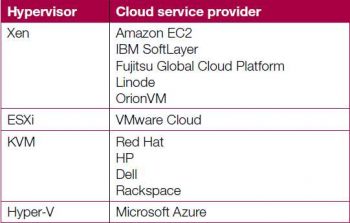

Hypervisors in the industry

The table below gives a few examples of hypervisors and the corresponding cloud service provider companies.

Cloud service providers and what they offer

Currently, there are a number of cloud service providers in the global market. Here are a few of them in the storage domain:

- JustCloud

- ZipCloud

- Dropbox

- Zoolz

- Livedrive

Research areas in cloud computing

Cloud computing and related services are a popular subject of research among scholars and academicians. Because cloud services span many domains, deployment models and algorithmic approaches, there is huge scope for research.

The following topics offer a lot of scope for research scholars in the cloud infrastructure domain:

- Energy optimisation

- Load balancing

- Security and integrity

- Privacy in multi-tenancy clouds

- Virtualisation

- Data recovery and backup

- Data segregation and recovery

- Scheduling for resource optimisation

- Secure cloud architecture

- Cloud cryptography

- Cloud access control and key management

- Integrity assurance for data outsourcing

- Verifiable computation

- Software and data segregation security

- Secure management of virtualised resources

- Trusted computing technology

- Joint security and privacy-aware protocol design

- Failure detection and prediction

- Secure data management within and across data centres

- Availability, recovery and auditing

- Secure computation outsourcing

- Secure mobile cloud

Problem formulation for R&D in cloud computing

Problem formulation is the first step in any research task, whether it is related to a thesis or a corporate research project. Work can begin once the problem has been identified by investigating different research papers and media reports.

First, research scholars or academicians need to focus on their own interests and skills. A researcher who is very good at mathematical modelling could choose a project in cloud cryptography, power optimisation, energy optimisation, security or any other topic built on mathematical functions. A researcher with a strong background in algorithm design could work on scheduling, trusted architectures, load balancing or related fields. All too often, a research topic is selected without analysing one's own expertise and interests.

Once the research topic is selected, the next task is the literature survey, without which no genuine research work can be done. In this process, research papers from prominent international journals in the selected area are analysed. If the topic is energy optimisation, for example, the papers studied should address energy optimisation specifically within cloud computing.

It is important to note that papers should be taken from journals related to the topic being researched rather than from journals of all streams. A researcher working on cloud computing should take papers from reputed journals in the cloud computing domain rather than from general computer science journals.

The specific international journals of cloud computing include:

- Journal of Cloud Computing (SpringerOpen)

- IEEE Transactions on Cloud Computing

When other international journals covering cloud computing are consulted, their indexing should be verified very carefully. Whether a journal is indexed in the Science Citation Index (SCI) should be checked against the Thomson Reuters journal list at http://ip-science.thomsonreuters.com/cgi-bin/jrnlst/jloptions.cgi?PC=K to avoid any problems. Many universities now strictly require their research scholars to publish only in SCI journals.

International journals indexed in SCI and Scopus should be analysed. Nowadays, many international journals claim to have SCI, SCI-Expanded or Scopus indexing, so researchers should check the authenticity of such claims. It is also advisable to verify a journal's Impact Factor on the Thomson Reuters website.

After a detailed study of the research papers in the latest journals, the problem or gap in the classical algorithms can be pointed out. In every research paper, the algorithm, results, conclusion and future work together give an idea of how the proposed work can be improved. The algorithm described in the research paper can be integrated with a metaheuristic technique such as Simulated Annealing, Ant Colony Optimisation, the Honey Bee Algorithm or another method, and such metaheuristics may give better results than the classical algorithm described in the base paper.
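As an illustration of combining a metaheuristic with a classical algorithm, the sketch below applies Simulated Annealing to a task-scheduling problem: assign tasks to VMs of different speeds so that the makespan (the finish time of the busiest VM) is minimised. A simple round-robin assignment stands in for the "classical algorithm" of a base paper and is used as the starting point. The makespan objective, the neighbour move (reassigning one random task), the parameter values and all function names are assumptions chosen for this example, not taken from any specific paper.

```python
import math
import random
from typing import List, Sequence, Tuple


def makespan(assignment: Sequence[int], task_lengths: Sequence[float],
             vm_speeds: Sequence[float]) -> float:
    """Finish time of the busiest VM, where assignment[i] is the VM index of task i."""
    load = [0.0] * len(vm_speeds)
    for task, vm in enumerate(assignment):
        load[vm] += task_lengths[task] / vm_speeds[vm]
    return max(load)


def anneal_schedule(task_lengths: Sequence[float], vm_speeds: Sequence[float],
                    initial: Sequence[int], t0: float = 10.0, cooling: float = 0.995,
                    steps: int = 2000, seed: int = 0) -> Tuple[List[int], float]:
    """Simulated Annealing over task-to-VM assignments, starting from `initial`."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    current = list(initial)
    current_cost = makespan(current, task_lengths, vm_speeds)
    best, best_cost = list(current), current_cost
    temp = t0
    for _ in range(steps):
        # Neighbour move: reassign one randomly chosen task to a random VM
        candidate = list(current)
        candidate[rng.randrange(len(candidate))] = rng.randrange(len(vm_speeds))
        cost = makespan(candidate, task_lengths, vm_speeds)
        delta = cost - current_cost
        # Metropolis rule: accept improvements, accept worse moves with probability exp(-delta/T)
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            current, current_cost = candidate, cost
            if cost < best_cost:
                best, best_cost = list(candidate), cost
        temp = max(temp * cooling, 1e-9)  # geometric cooling, floored above zero
    return best, best_cost
```

For example, with task lengths `[4, 8, 3, 7, 5, 2]`, VM speeds `[1.0, 2.0]` and the round-robin start `[0, 1, 0, 1, 0, 1]`, the starting makespan is 12. Because the best solution found is tracked separately, the annealed result is never worse than the starting assignment, which makes it easy to report the improvement over the base algorithm.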

Once the problem is found, the synopsis or research proposal is prepared. This is one of the most important tasks on the whole research path and should be done very carefully. All too often, research scholars or supervisors write the synopsis in a haphazard and careless manner, yet once it has been approved by the university, it can cause serious problems if the students or guides themselves are not clear about every point in the proposal. The university's research committee focuses on the objectives and hypotheses before approving a synopsis, so students and guides should draft the hypotheses and specify the objectives of the research with the utmost care. Students and guides often state overly ambitious objectives to impress the research committee, and after approval it can become very difficult to achieve all of these grand objectives in order to complete the degree.

Simulation of a research solution in cloud simulators

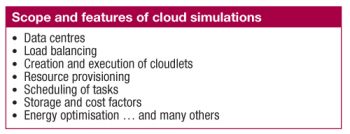

Cloud service providers charge their clients according to the space or services provided to them. In R&D, actual cloud infrastructure is not always available for performing experiments, and it is not feasible for a research scholar, academician or scientist to hire cloud services every time an algorithm or implementation needs to be executed. For research and testing, open source libraries are available that emulate cloud services and their execution. Cloud simulators are now widely used by research scholars and practitioners without paying fees to any cloud service provider.

Using cloud simulators, researchers can run their algorithmic approaches on a software library and obtain results for different parameters, including energy consumption, security, integrity, confidentiality, bandwidth, power and many others.
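Energy is one of the parameters most often measured in such simulation studies. A common simplification is a linear power model, in which a host draws its idle power plus a share of its dynamic power range proportional to CPU utilisation. The sketch below assumes that model; the 100 W idle and 250 W peak figures and the function names are illustrative assumptions, not measurements of any real server.

```python
from typing import Sequence


def host_power(utilisation: float, p_idle: float, p_max: float) -> float:
    """Linear power model (watts): idle power plus a proportional share of the dynamic range."""
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be in [0, 1]")
    return p_idle + (p_max - p_idle) * utilisation


def energy_kwh(utilisation_trace: Sequence[float], interval_s: float,
               p_idle: float = 100.0, p_max: float = 250.0) -> float:
    """Energy of one host over utilisation samples taken every interval_s seconds."""
    joules = sum(host_power(u, p_idle, p_max) * interval_s for u in utilisation_trace)
    return joules / 3.6e6  # 1 kWh = 3.6 million joules
```

Under these assumptions, a host sampled at 0, 50 and 100 per cent utilisation for one hour each consumes 0.525 kWh. Running two hosts at 30 per cent utilisation draws 2 × 145 = 290 W, whereas consolidating the same load onto one host at 60 per cent (with the other switched off) draws 190 W, which is the kind of trade-off that energy-aware research explores.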

Tasks performed by using cloud simulators

Cloud simulators help to perform the following tasks:

- Modelling and simulation of large-scale cloud computing data centres

- Modelling and simulation of virtualised server hosts, with customisable policies for provisioning host resources to virtual machines

- Modelling and simulation of energy-aware computational resources

- Modelling and simulation of data centre network topologies and message-passing applications

- Modelling and simulation of federated clouds

- Dynamic insertion of simulation elements, stopping and resuming of simulation

- User-defined policies for allocating hosts to virtual machines, and policies for allocation of host resources to virtual machines
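User-defined allocation policies can be illustrated with a toy placement loop in which the policy is simply a function supplied by the user. This is a hypothetical Python sketch, not the API of any particular simulator: `Host`, `first_fit`, `worst_fit` and `place_vms` are illustrative names, and only CPU cores are modelled.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class Host:
    name: str
    cores: int
    used: int = 0
    vms: List[str] = field(default_factory=list)

    def free(self) -> int:
        return self.cores - self.used


# A policy receives the hosts and the requested cores, and returns a host or None
Policy = Callable[[List[Host], int], Optional[Host]]


def first_fit(hosts: List[Host], cores: int) -> Optional[Host]:
    """Pick the first host with enough free cores (tends to pack VMs together)."""
    return next((h for h in hosts if h.free() >= cores), None)


def worst_fit(hosts: List[Host], cores: int) -> Optional[Host]:
    """Pick the fitting host with the most free cores (tends to spread load)."""
    fits = [h for h in hosts if h.free() >= cores]
    return max(fits, key=Host.free, default=None)


def place_vms(hosts: List[Host], requests: List[Tuple[str, int]], policy: Policy) -> List[str]:
    """Allocate each (vm_name, cores) request using `policy`; return names of rejected VMs."""
    rejected = []
    for vm_name, cores in requests:
        host = policy(hosts, cores)
        if host is None:
            rejected.append(vm_name)
        else:
            host.used += cores
            host.vms.append(vm_name)
    return rejected
```

With a 4-core and an 8-core host and requests of 2, 2 and 4 cores, `first_fit` packs the two small VMs onto the first host, whereas `worst_fit` sends them to the larger host first. Swapping one policy function for another is all it takes to compare strategies, which is the kind of experiment simulators make cheap.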
